A look under the hood of the AI workflow turning milestone submissions into moments of momentum — for millions of young entrepreneurs across India.

How do you mentor 4.7 million young entrepreneurs?

You can’t — at least, not the way mentorship has traditionally worked. One teacher cannot sit with every student and walk through their business idea. One program manager cannot read every sample plan or watch every pitch video. And yet, every young person taking a first shot at entrepreneurship deserves to be seen — their idea acknowledged, their effort validated, their next step made clear.

This is the quiet, scale-shaped problem we set out to solve with Udhyam Saarthi, our AI Mentor for students and teachers in India’s government schools.

This post opens the engine room. We’ll walk through how Saarthi validates, assesses, and delivers feedback on student submissions — fairly, quickly, and at the scale of millions.

The context: Make Bharat Entrepreneurial

At Udhyam, we work on one of India’s most stubborn inequities: the opportunity gap. Over 90% of young Indians entering the workforce end up in unstable work — not because they lack ambition, but because they lack access and entrepreneurial competencies.

Our answer is the Entrepreneurial Mindset Curriculum, a four-year learning journey now running across government schools in several Indian states. More than 4.7 million students have walked parts of this journey. They move from spotting local problems to building prototypes, running market surveys, pitching ideas — and in some states, launching real ventures with seed funding. To date, more than 1 million young people have received over $25 million in seed capital through state governments.

Running a program this large creates a very specific problem: high-quality, individualised guidance at population scale. That’s where Saarthi comes in.

Why AI, and why now

Saarthi does two jobs. On WhatsApp (and soon on a PWA), it answers student and teacher questions on demand. And behind the scenes, it assesses the submissions students make at each milestone of the curriculum — turning what used to be a one-way upload into a two-way conversation.

That second job is what this post is about.

The design brief was simple to state, hard to execute: every student who submits something should get thoughtful, encouraging, useful feedback within seconds — in their language, at their level, with a clear next step. At scale. Without compromising safety. Without losing the human feel.

What students submit, and why it matters

In Grades 11 and 12, student teams complete several milestone submissions through our WhatsApp chatbot. Each one is a moment — not a test.

- Idea submissions (text). Each team submits two business ideas along with a short reflection. They get instant validation, concise feedback (≤50 words), and a pointer toward what to do next.

- Sample plan and market survey (images). Students photograph workbook pages, hand-written tables, or notebook sketches. Our system is deliberately forgiving here — handwriting is hard, lighting is worse, and blocking a student over a blurry photo is not an option.

- Pitch video (1–5 minutes). A short business pitch the team records themselves. We transcribe, check for basic completeness, and send back friendly, structured feedback with concrete next steps.

Each submission is a small fork in a student’s journey. Get the feedback wrong — too harsh, too slow, too generic — and they lose momentum. Get it right, and they come back with a better version.

Design principles behind every submission flow

Before we get into the architecture, five principles that shape every decision:

- Keep students moving. Low friction, low latency, high acceptance, zero dropouts. Students don’t always get a second chance at momentum.

- Encourage completion, not compliance. Motivating tone, clear feedback, gentle nudges when someone stalls.

- Be safe. Abuse-resistant, age-appropriate, and privacy-preserving by default.

- Close the loop. Feedback should lead to measurable improvement on resubmission.

- Stay modular. Models, prompts, and policies should be swappable without rewriting the chatbot.

The most important of these is leniency by default. We would rather accept a noisy submission and nudge the student forward than block a valid one and lose them.

Why this matters to our users

- For students: instant, constructive feedback builds momentum, confidence, and agency — the core muscles of entrepreneurship.

- For teachers: automation handles the heavy lifting, so teachers can coach the students who most need their attention.

- For the program: standardised signals and APIs let us scale across states and languages without redesigning the chatbot every time.

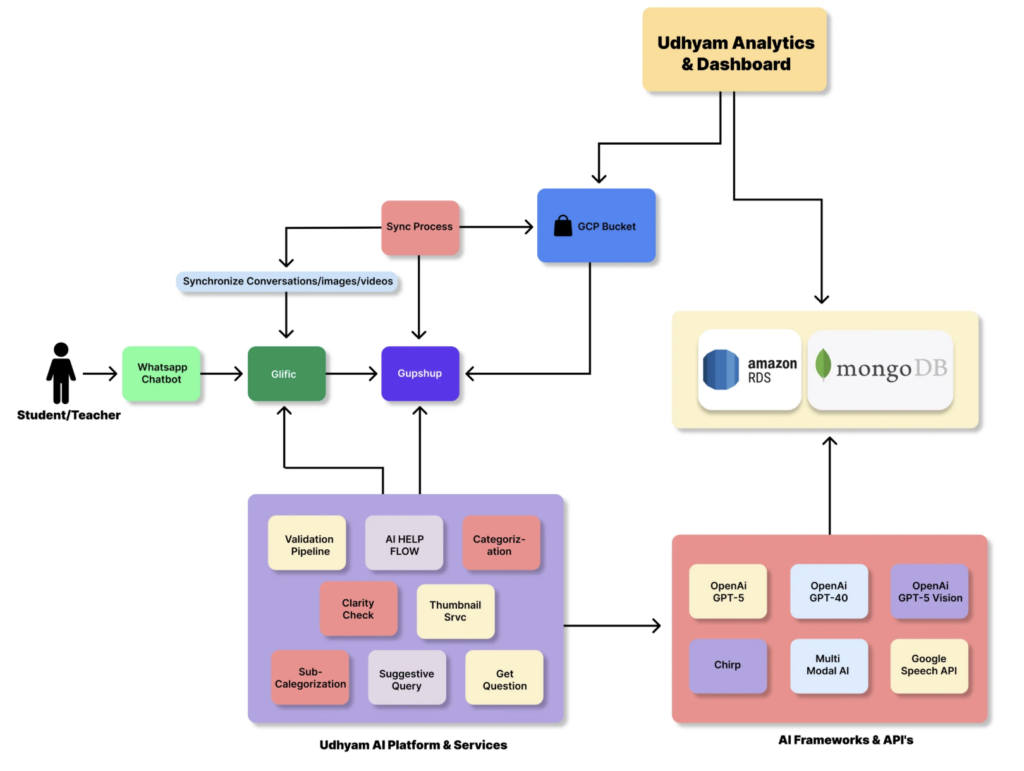

Architecture at a glance

Saarthi’s submission workflow is built as a set of stateless, serverless, containerised microservices on Google Cloud — each independently scalable.

Core services

- Ingestion Service — normalises payloads (text, image, video) along with metadata like student ID, team ID, state, and locale.

- Validation Service — applies task-specific prompts and models, returning strict JSON (valid, error, reason).

- Evaluation & Feedback Service — runs asynchronous rubric scoring and writes short, student-friendly feedback.

- Media & ASR Service — stores uploaded video, generates transcripts, and computes duration.

- Store — holds submissions, decisions, scores, reasons, and telemetry for analytics and audit.

The tooling underneath

- WhatsApp orchestration: Glific by Tech4Dev.

- Observability: Langfuse for LLM traces and costs; BigQuery for analytics.

- Deployment: Python services on Google Cloud (containerised, serverless), with CI/CD via GitHub Actions.

- Text analysis: GPT-4 and 5 series models (primary); Gemini 2.5 Flash (secondary, mostly for translation).

- Image analysis: GPT-5 vision and GPT-5 mini.

- Video transcription: a multimodal pipeline led by our AI partner Consuma.ai, supported by Gemini, OpenAI Whisper, Google Chirp, and open-source ASRs such as Bhashini.

- Data: BigQuery (primary), MongoDB (secondary), Looker Studio for visualisation.

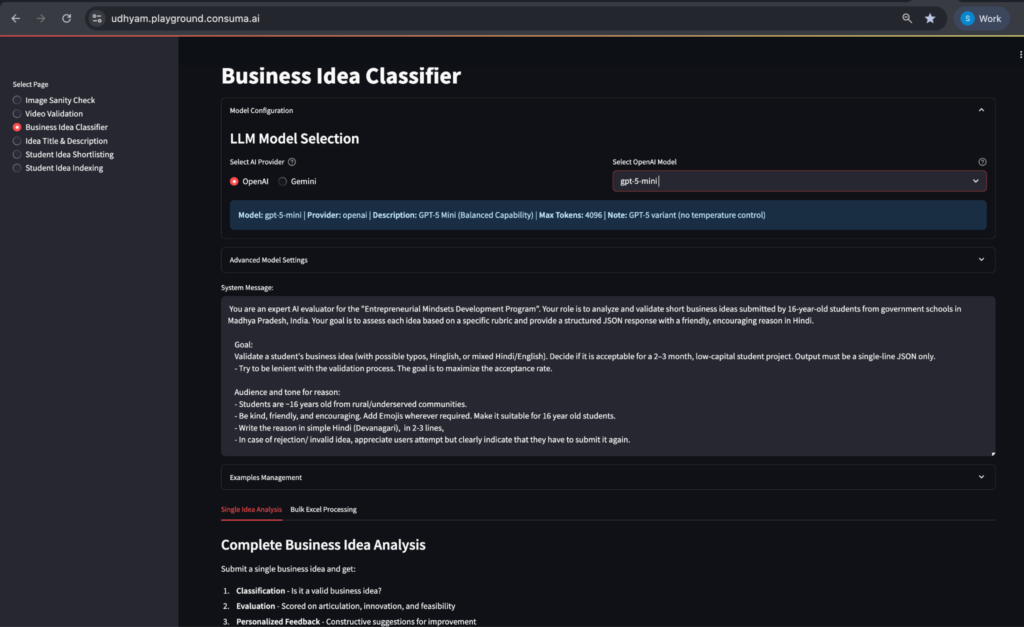

Internal tooling: a home-grown prompt-and-workflow playground for testing, bulk golden-set evaluation, and rapid prompt deployment.

What happens under the hood

Each of the three milestone types — text, image, video — gets its own purpose-built flow. What’s common across all three: a two-step pattern of fast, synchronous validation followed by deeper, asynchronous evaluation.

1) Idea submissions (text)

Real-time validation happens first — a lightweight, lenient check that returns a JSON verdict:

{“valid”: <true|false>, “error”: <0|1|2|3>, “reason”: “<in student’s language>”}

Error codes: 0 gibberish, 1 unclear or vague, 2 accepted (with optional feasibility caution), 3 duplicate of a previous idea.

In parallel, an asynchronous evaluation scores the idea across four dimensions: articulation, distinctness, 3P alignment, and feasibility. The student then receives feedback (≤50 words — praise plus two or three actionable tips) and concrete next steps:

{

“feedback”: “Friendly, encouraging feedback in simple language, explaining

what they did well and how they can improve.”,

“next_steps”: “2–3 specific things they can do next”,

“resubmission_recommended”: true/false

}

Why split validation from evaluation? Validation guards the door — it’s fast and keeps noise out of the pipeline. Evaluation does the thoughtful work. Running them asynchronously keeps latency low without sacrificing quality.

2) Sample plan and market survey (images)

The design here is ruthlessly lenient — because vision models still struggle with real-world student handwriting under real-world lighting.

{“valid”: <true|false>, “error”: <0|1|2>, “reason”: “<in student’s language>”}

Error codes: 0 invalid (blank page, a selfie, unrelated image), 1 valid but low confidence (the system asks a gentle “is this your plan?”), 2 valid and confident (congrats plus a forward-looking nudge).

We accept workbook pages, hand-drawn tables, even slightly blurry images — and then provide rubric-based feedback and next steps wherever possible.

3) Pitch video

Video goes through more gates. Static pre-checks first: direct upload only; reject videos shorter than one minute or longer than five; flag likely plagiarism using transcript similarity (>90%) combined with an exact duration match.

Then lenient, transcript-based validation: accept if the video contains at least an identity hint (who the team is) and an idea or problem statement.

Valid output:

{“valid”: true, “error”: 0,

“feedback”: “3–4 encouraging, constructive lines”,

“next_steps”: “1) … 2) … 3) …”}

Invalid output:

{“valid”: false, “error”: 1/2/3,

“feedback”: “Appreciative tone plus a precise resubmission instruction.”}

Canonical data contracts

Every service speaks the same shape. Here’s what flows in:

{

“submission_id”: “auto”,

“student_id”: “YBP25_MP_12345”,

“team_id”: “TEAM_MP_045”,

“milestone”: “idea|sample_plan|market_survey|pitch_video”,

“payload”: {“text|image_url|video_url”: “…”},

“locale_hint”: “hi-en”,

“created_at”: “ISO8601”

}

And the decision envelope that flows out:

{

“valid”: true,

“error”: 2,

“reason”: “student-facing string, in user’s preferred language”,

“meta”: {“model_ver”: “…”, “latency_ms”: 620}

}

Uniform JSON across milestones sounds boring. It isn’t. It’s what lets us swap an ASR model, change a rubric, or onboard a new state — without refactoring the chatbot UX.

Cross-cutting design choices

A few non-obvious decisions that shape the whole system:

- Leniency by default — prefer acceptance plus a nudge over blocking an edge case.

- Uniform JSON — the same top-level keys everywhere; simpler orchestration and analytics.

- Async scoring — heavy evaluation runs off the critical path, keeping latency low.

- Student-friendly tone — simple Hindi (Devanagari), emojis where appropriate; English only in internal logs.

- Observability first — latency, model version, acceptance rate, resubmission rate, and post-feedback score deltas, all logged by default.

Outcomes we’ve seen in the field

Idea submissions (text)

- Idea 1: 47.5k attempts; 40.6k valid submissions by 37.1k teams.

- Idea 2: 43.8k attempts; 39.1k valid submissions by 37k teams.

What stood out:

- 99.7% of teams who submitted Idea 1 came back and submitted Idea 2 — drop-off between the two milestones decreased from 49% (from last year) to 0.3%.

- Total ideas per team increased by 63% from last year.

- We saw a clear drop (from 20% to 12%) in invalid attempts at Idea 2 — evidence that Idea 1 feedback was being read and applied.

- A meaningful share of teams (~12%) voluntarily resubmitted valid ideas with improved quality after feedback, a strong signal that the loop is closing.

Sample plan submissions (images)

- Sample plan 1: 39.6k attempts, 35.7k valid, by 34.8k teams.

- Sample plan 2: 36.3k attempts, 35.5k valid, by 34.7k teams.

What stood out:

- Evaluation was deliberately lenient, because we had low confidence in current vision models’ ability to read student handwriting reliably.

- Zero accuracy complaints from teachers or students — a noticeable improvement over last year’s rollout.

- A healthy share of teams resubmitted after their first valid attempt, producing better-quality work.

Pitch video submissions (videos)

- Business pitch videos: 48.0k attempts, 34.2k valid, by 33.4k teams.

What stood out:

- Evaluation was a bit stricter, since this is the most important submission in the program journey.

- ~30% increase in quality of pitch videos measured with multidimensional rubric.

Zero accuracy complaints from teachers or students — a noticeable improvement over last year’s rollout.

How we know it’s working

The metrics we watch most closely:

- Throughput and latency per service.

- Acceptance rate by milestone, state, and language.

- Resubmission rate and post-feedback improvement.

- False-block audits (manual sampling of rejected submissions).

- Drift — in language mix, idea distributions, video length.

The pattern we keep looking for: high acceptance, low latency, and measurable improvement on resubmission. When those three move in the right direction together, the system is working as designed.

Safety, privacy, and governance

Working with minors in government schools raises the safety bar. A few of the controls we’ve built in:

- Input screening — hard-coded relevance and intent checks; invalid inputs are either declined or gently redirected.

- Behavioural guardrails — explicit “how to say no” policies; empathetic, context-accurate prompts.

- Data privacy — PII minimisation (we log student IDs, not names); phone numbers redacted from transcripts; role-based access with NDAs required for any PII; secure storage and least-privilege controls.

- Retention — transcripts kept for ≤12 months (configurable).

- Human-in-the-loop — any high-stakes use (e.g., shortlisting teams for seed funding) requires human moderation.

- Video visual screening — in progress, to catch inappropriate visual content that transcripts alone would miss.

Built for reuse

From the start, we designed Saarthi as a public good — not a proprietary stack.

- Prompts are effectively pure functions over inputs. They’re portable across models.

- Error codes and acceptance criteria live in config, not code.

- Any ASR or LLM can be swapped in or out; the data contracts stay stable.

- Ingestion, validation, and evaluation are independent services — partners can plug in their own validators without touching the rest of the pipeline.

What’s next

The submission workflow we’ve described is one node in a much larger ambition.

Project-based learning is gaining real global traction as the way to build life skills and real-world competencies in young people. But the field still lacks scalable, credible tools to measure and communicate what students are actually learning. A teacher can sense when a student grows in confidence or sharpens their problem-solving — but turning that into recognised, actionable evidence remains hard.

That gap is what we’re tackling next, with an experiment we’re calling AI to Democratise Project-Based Evaluation.

It brings together practitioners, funders, and researchers around a single goal: build an iterative, validated competency assessment system that’s replicable across disciplines — so every student, regardless of subject or geography, receives recognised, actionable feedback on the life skills and competencies they develop through real projects. AI does the heavy lifting on assessment and first-pass feedback; teachers stay in the loop to review, refine, and contextualise. Saarthi’s submission workflow is the working prototype; this next phase generalises it well beyond entrepreneurship.

Alongside this, a few smaller, continuous bets:

- Deeper rubric coverage across every milestone.

- Experiments on agency scoring — can we measure not just the quality of an idea, but the agency behind it?

- Expanded analytics and a benchmarking harness for partners.

- Continued work on cost and latency, without compromising quality.

Let’s build together

We’re building this as a public-good workflow that others can reuse. If you’re a state partner, a nonprofit, a researcher, or a team working on something adjacent, there are a few ways to plug in:

- Bring your validators and wire them into our JSON contracts.

- Co-develop domain variants — agriculture, health, primary education.

- Benchmark your models against our test fixtures.

- Use our home-grown prompt playground for your own prompt engineering and workflow testing.

- Co-build dashboards for cross-program comparisons.

Write to us at product@udhyam.org

Saarthi is one of the quiet, scalable systems turning Udhyam’s mission into daily reality — one submission, one nudge, one student at a time.